Every few months, a new story surfaces about a company that spent a year and several million dollars trying to add AI to their product and has nothing to show for it. The pilot was shelved. The vendor blamed the data. The data team blamed the architecture. The architecture team blamed the vendor. The product ships without the AI feature, and everyone quietly agrees not to bring it up again.

These stories have become so common that some companies have started using them as justification for not trying at all. "We'll wait until AI matures a bit more." What they mean is: "We've seen how this goes and we don't want it to go that way for us."

The problem is, the companies that are waiting are watching their competitors pull ahead in ways that are going to be very hard to close. And the failure stories, as painful as they are, almost never happened because AI wasn't ready. They happened because the approach was wrong from the start.

The $12 Million Lesson Nobody Talks About

There's a Fortune 500 retailer whose story has been making the rounds in engineering circles. They spent $12 million and 18 months trying to add AI-powered demand forecasting to their inventory system, a system built in 2008, running Oracle, connected to 47 different data sources across warehouses, stores, and suppliers.

Fourteen of those eighteen months were spent not on AI at all, but on getting their data into a format the models could actually process. The inventory data lived in 47 different formats: Oracle tables, SQL Server databases, flat files, custom COBOL outputs. By the time they had cleaned enough of it to run predictions, the outputs conflicted with the legacy system's business rules and triggered cascading errors across the platform. The pilot was quietly shelved.

While this was happening, a four-year-old competitor built their entire inventory system around machine learning from day one. No legacy formats. No 47 integrations. No inherited mess. They undercut the retailer by 30% and took market share in three regions.

The retailer's mistake wasn't trying to add AI. It was treating AI as a feature to be added rather than a capability that the entire data architecture needed to support. By the time they understood the real scope of the problem, they had already spent the budget.

The Question Everyone Asks Last (That Should Be Asked First)

The most common misconception I encounter is that adding AI to an existing product is fundamentally a question of choosing the right model. GPT-4 or Claude? Fine-tune or use RAG? On-device or cloud inference?

These are real questions, but they are the last questions you should be asking, not the first. The first question is always: where does your data live, and what shape is it in?

This is unglamorous work. Nobody gets excited about data audits. But without it, you are building on sand. AI models learn from data, produce outputs based on data, and fail in proportion to how messy, inconsistent or incomplete that data is. A model that performs beautifully on your clean test set will behave unpredictably on the real data flowing through your production system, because real data is always messier than you expect.

Common findings from a data audit: timestamps are inconsistent across systems. User IDs don't match between databases. Key fields are null far more often than anyone realised. Historical data was captured for reporting, not for training. None of these are fatal problems. But all of them need addressing before you build anything on top. Teams that skip this step discover it six months into development, at which point the cost of fixing it has compounded significantly.

Once you know what you are working with, the next question is: what are you actually trying to achieve? Not "add AI to the product." Something precise. "Reduce the time our construction site managers spend on daily labour reporting by 40%." Or: "Increase the percentage of users who engage with the recommendation feature from 12% to 35% within 90 days."

A precise goal forces clarity on everything downstream. What use case are you solving? What data do you need? What does success look like in production, not just in testing? Without this, you will build something that performs well in a demo and delivers nothing in the real world.

Three Patterns That Actually Work

There are three patterns we have seen work consistently when adding AI to existing products.

AI as a decision layer. Your existing system handles the core workflow. AI sits alongside it, analysing data and surfacing recommendations, but not replacing the logic that keeps things running. The system keeps doing what it does. The AI adds a new dimension of insight on top of it.

Take Eyrus, a construction workforce intelligence platform we have been building with since 2021. Their platform already handled worker check-ins, time tracking, and site access management for some of the largest construction projects in the US, covering over $250 billion in project value. The question of how to add AI wasn't "what can AI do for construction?" It was specific: how do we help project managers spot schedule risks before they become schedule disasters? That precise framing led to a precise solution, connecting real workforce deployment data to project schedules and surfacing deviations before they compound. The intelligence layer made the existing platform significantly more valuable without touching the core systems that project teams already depended on.

AI as a personalisation engine. Your existing product already delivers an experience. AI makes that experience adaptive, responding to individual user behaviour and context in ways a static product cannot. Precision Pro Golf's rangefinder app illustrates this cleanly. The core product, accurate distance measurement, was already working and already trusted by golfers. The AI layer added caddie-like club recommendations, analysing distance to the pin, elevation change, wind conditions, and a golfer's own historical shot data to suggest the right club. The fundamental product didn't change. The experience became significantly more useful.

AI as a hardware-software bridge. This pattern is specific to connected and IoT products. Physical hardware already exists, already works, already has users. AI connects it to richer software experiences. SkinCeuticals' Custom D.O.S.E. machine is the best example we have been involved with directly. The hardware, a precision serum dispenser used in dermatology clinics, mixes and dispenses personalised skincare formulations from 24 individual ingredients. The AI layer, running through an Android tablet application, communicates with the machine over BLE, manages the dispensing mechanism, and customises formulations based on each patient's specific skin profile. The machine didn't change. The intelligence layer transformed it from a piece of hardware into a personalised skincare system. TIME named it one of the Best Inventions of 2019.

L'Oréal's Brow Magic device followed the same pattern: an AR-powered iOS app using ARKit, ModiFace's computer vision SDK, and BLE to bridge a physical brow applicator and a virtual try-on experience. CES Innovation Award, 2023.

In all three cases, nothing was rebuilt from scratch. The AI was layered onto what already existed, carefully, incrementally, with the existing product staying live throughout.

What the Integration Layer Needs to Get Right

The integration layer, the code that sits between your existing system and the new AI capability, is where most technical debt accumulates and where most teams underinvest.

A few things that need to be true about it. It needs to be asynchronous by default, because AI inference takes time and your existing system shouldn't stall waiting for it. It needs to degrade gracefully: if the AI layer goes down, the underlying product should keep working. And it needs observability from day one. Log inputs, outputs, latency, errors. AI systems behave unpredictably in ways that only become visible in production, under real usage, with real data. You cannot improve what you cannot see.

The human-in-the-loop question also doesn't get enough serious attention at the design stage. For high-stakes decisions, anything involving money, safety, access, or irreversible actions, you need a human review step before the AI output takes effect. This isn't sentiment, it's engineering. Automated systems that act on AI outputs without human oversight create legal, reputational, and operational exposure. UnitedHealth learned this the hard way in 2025, when their nH Predict algorithm was found to have a 90% error rate on claims appeals. Nine out of ten AI-generated coverage denials were overturned when a human reviewed them. The class action is ongoing.

On Tools: Match the Pattern, Not the Hype

The tooling landscape has matured considerably in the last two years and the options are more practically usable than they have ever been.

For language-based features, chat, summarisation, search, classification, the foundation models (OpenAI's GPT-4o, Anthropic's Claude, Google's Gemini) accessed via API, with a RAG layer connecting them to your proprietary data using LangChain or LlamaIndex, cover most of what you will need. For on-device or real-time inference, vision tasks, sensor data, anything that needs to run without network connectivity, TensorFlow Lite and Core ML are the right tools. For agentic automation, where AI needs to orchestrate actions across multiple systems rather than just surface insights, LangGraph has become the practical standard. For model training and fine-tuning, PyTorch and Hugging Face if you have the data and infrastructure appetite, the OpenAI fine-tuning API if you want the benefits with less overhead.

The choice of tool should follow from the use case, not the other way around. Too many projects start by selecting a technology and then looking for problems it can solve. That is how you end up with a RAG system answering questions nobody is asking.

Why the "Rebuild Everything" Instinct Is So Expensive

One thing worth saying plainly: the instinct to rebuild everything from scratch is almost always wrong, and it is expensive in ways that take a long time to reveal themselves.

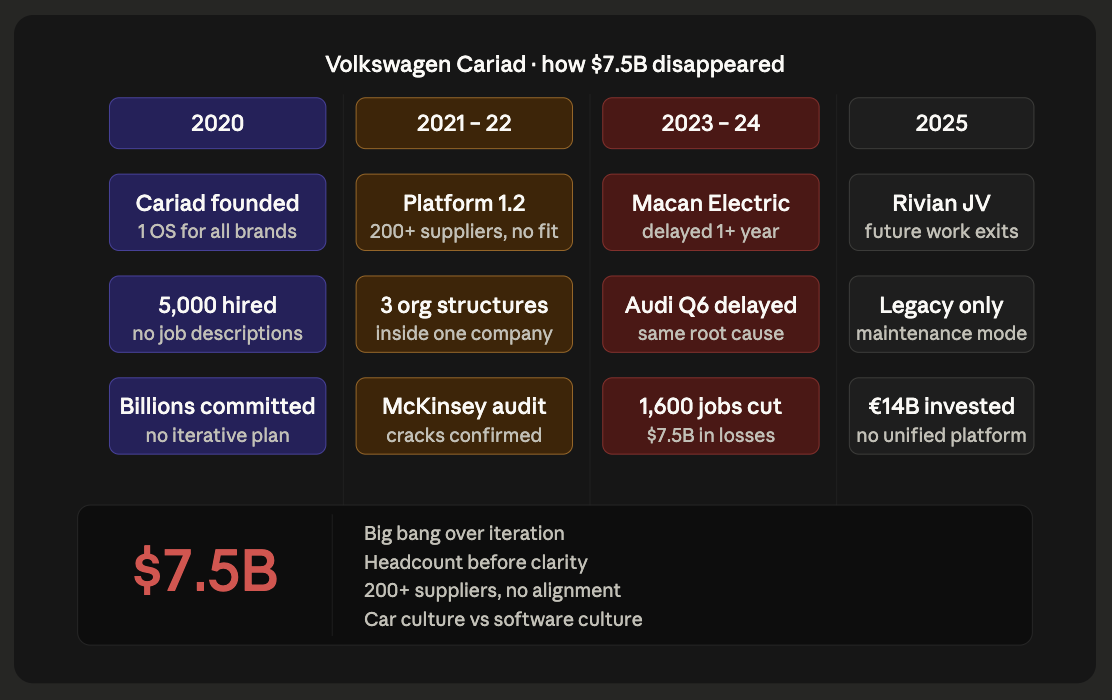

Volkswagen committed to it in 2020. They called the initiative Cariad, a centralised software unit that would build one unified AI-driven operating system for all of their brands. Five thousand engineers. Billions committed. The vision was coherent. The execution became automotive history's most expensive software failure. Platform 1.2 alone had 200 different suppliers. Engineers spent their time managing inter-supplier conflicts rather than building features. Audi, Porsche, and VW each built their own internal structures within what was supposed to be a single team. The Porsche Macan Electric launched over a year late. The Audi Q6 E-Tron was delayed. $7.5 billion in operating losses. 1,600 jobs cut.

One insider described joining Cariad and having no idea what their job was. No job description. So they started building what they knew from their home brand. That is exactly what everyone did. Instead of a lean software company, they created a patchwork of mini-corporations generating PowerPoint decks instead of code.

The lesson isn't that Volkswagen shouldn't have attempted this. It is that the "big bang" approach, transforming everything at once, replacing rather than layering, scaling headcount before scaling clarity, is how ambitious projects become expensive cautionary tales. The companies that are shipping AI in 2026 are the ones that started with one use case, validated it thoroughly, and expanded from there.

Where to Start

If your team is trying to figure out how to add AI to an existing product and you are not sure where to begin, the most valuable first step is an honest architecture assessment. Not a vendor pitch. Not a proof of concept. A clear-eyed read of what your current system can actually support, where the data problems are, and where the highest-value integration points are.

That assessment shapes everything that comes after: the use case you prioritise, the tools you choose, the data work you need to do first, and the integration architecture you build. Getting it right upfront is significantly cheaper than discovering the problems halfway through development.

The software you already have isn't the obstacle. It is the foundation you are building from.

Start here: roro.io/upgrade-your-stack

Roro is a product innovation studio that has helped companies including L'Oréal, Precision Pro Golf, Luxer One, Eyrus, and Hypelist build and integrate AI, mobile, and connected experiences since 2017.

Frequently Asked Questions

1. How long does it take to add AI to an existing product?

A focused integration, such as a recommendation engine, a RAG-powered search feature, or an on-device inference model, can be validated and in production within 6 to 10 weeks. More complex work involving hardware, custom model training, or significant data infrastructure will take longer. The scoping phase, where you identify the use case and audit the data, typically takes 1 to 2 weeks and shapes everything downstream.

2. Do we need to rebuild our existing system to add AI?

In most cases, no. The goal is to add an intelligence layer that connects to your existing data and workflows, not replace the systems your business already depends on. The exceptions are cases where the architecture fundamentally cannot support integration, for example no APIs, no structured data, or no way to pass information to an external system. Even then, a targeted modernisation of the blocking components is usually far less disruptive than a full rewrite.

3. What is the difference between fine-tuning and RAG?

Fine-tuning adjusts the model's weights using your data, making it more fluent in your domain's specific language and patterns. RAG keeps the model unchanged but gives it access to your data at inference time by retrieving relevant documents and passing them as context. RAG is faster to implement, cheaper to maintain, and better for use cases where your data changes frequently. Fine-tuning is better when you need the model to behave in a very specific, consistent way that differs from its default behaviour.

4. How do we know if our data is ready?

Signs it is ready: stored in structured formats, consistent across systems, sufficient volume, and the signals your use case needs are actually captured. Signs it is not: key fields frequently null, data living in spreadsheets or PDFs, different systems using different IDs for the same entities, or historical data captured for reporting rather than analysis. The audit takes about a week. Discovering these problems mid-development takes months.

5. Can AI run on a mobile app without internet?

Yes. On-device inference using TensorFlow Lite or Core ML runs directly on the device with no network dependency. The tradeoff is capability: on-device models are smaller and suited to specific, well-defined tasks rather than open-ended reasoning. For real-time vision, audio processing, or latency-sensitive use cases, this is often the right choice regardless of connectivity.

.png)

.webp)